The transition from generative AI as a novelty to a functional component of the creative stack has been messy. For indie makers and prompt-first creators, the initial excitement of “text-to-anything” often hits a wall when it comes to repeatable, professional-grade output. We have moved past the era where a single prompt suffices for a campaign. Today, the focus is on the pipeline—the specific sequence of tools and refinements that transform a raw inference into a publishing-ready asset.

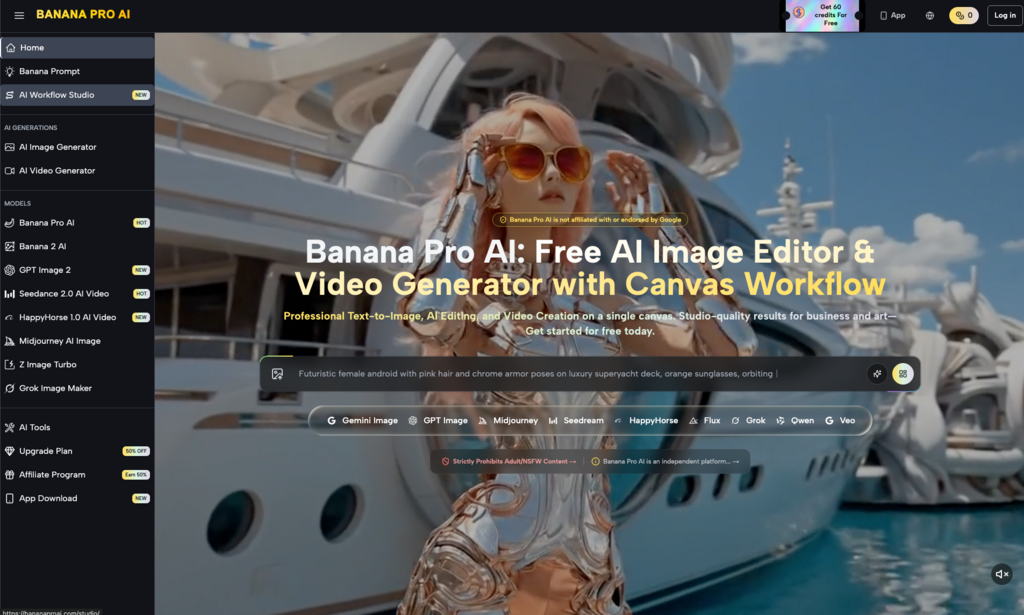

Within the current landscape, the ecosystem surrounding Nano Banana Pro offers a pragmatic lens through which we can view this shift. Instead of treating image and video generation as isolated events, effective operators are beginning to treat them as modular stages in a broader workflow. This requires a breakdown of use cases that prioritizes control over randomness, particularly when toggling between the speed of Nano Banana and the deeper, canvas-based controls of Banana Pro.

The Shift from Prompting to Direction

Early adoption of AI tools focused heavily on “prompt engineering.” However, for those building actual products or content streams, the prompt is merely the starting point. The real work happens in the iteration phase. This is where the distinction between different model weights and interface capabilities becomes critical.

When working in a high-velocity environment, a Nano Banana workflow is typically prioritized for rapid ideation. At this stage, the goal isn’t a finished product but a “proof of concept” for a visual direction, so the latency between a concept and a visual anchor needs to be as low as possible. If a creator is trying to establish a mood board for a new landing page or a social media aesthetic, the speed of the Banana AI engine allows for a volume of iterations that would be cost-prohibitive in a traditional design environment.

However, a significant limitation remains: high-speed models tend to trade fine-grained detail for throughput. Creators frequently find that while the composition is correct, specific textures or anatomical details need a second pass. This is an essential reset of expectations: no single model currently delivers both extreme speed and perfect fidelity across every category of imagery.

Managing the Canvas with Banana Pro

As a project moves from ideation to production, the requirements change. This is where Banana Pro shifts the focus toward a canvas-based environment. For an indie maker, the ability to manipulate an image spatially—rather than just regenerating the entire frame—is the difference between a tool and a toy.

The use case here is “Asset Refining.” When an image is 90% of the way there, but a specific element is distracting or incorrect, a standard text-to-image generator is often counterproductive: it might fix the hand but change the entire lighting scheme of the room. A dedicated AI Image Editor provides the surgical precision needed to modify specific regions. By using image-to-image workflows or inpainting within the canvas, creators can maintain the integrity of their initial vision while correcting the inevitable artifacts that come with generative models.

This “operator-led” approach is less about asking the AI for a miracle and more about using it as a highly capable assistant that follows specific spatial instructions. It moves the creator into the role of a creative director rather than just a person typing into a box.

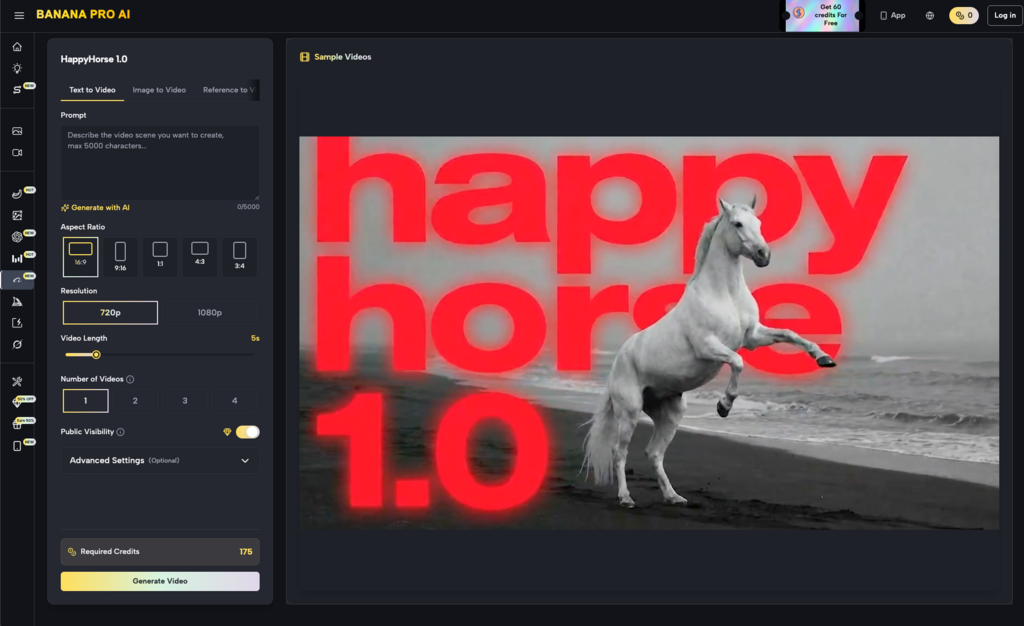

Video as a Derivative of Stills

The integration of video into the Nano Banana Pro framework represents one of the more complex use cases in modern publishing. The industry is currently grappling with the “consistency problem.” Generating a beautiful image is one thing; generating five seconds of motion that respects the physics, lighting, and character design of that image is another entirely.

For content teams, the most practical use case for AI video right now is not long-form storytelling, but “Atmospheric Enhancement.” This involves taking a high-quality still generated in the image suite and applying subtle motion—parallax, lighting shifts, or environmental effects.

It is important to be cautious here: AI video tools still struggle with complex human kinetics. If your use case requires a character to perform a specific, multi-step physical action like tying a shoe or playing a guitar, the current generation of tools will likely produce “uncanny valley” results. The most successful operators use video generation for backgrounds, abstract transitions, or product hero shots where the motion is fluid and simple rather than anatomically demanding.

Practical Publishing Workflows

To understand where these tools fit, we can break down a few standard publishing scenarios that indie makers face daily.

1. The Rapid Prototyping Cycle

For a product launch, a creator might need twenty different variations of a hero image to A/B test on a landing page. Using Banana AI, the process looks like this:

- Generate ten distinct concepts using a baseline model.

- Select the top three based on composition and brand alignment.

- Move those three into a high-fidelity environment to upscale and refine textures.

- Use the editor to swap out brand colors or specific product placements.

This workflow can reduce the time from “idea” to “live test” from days to minutes, without the need for a full-scale photoshoot.
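The funnel above (generate wide, select narrow, refine the survivors) can be sketched as a small Python pipeline. This is a minimal sketch under loud assumptions: the article describes no API for Nano Banana or Banana Pro, so `generate_concepts` and `refine` are hypothetical stubs standing in for the fast baseline pass and the high-fidelity pass (upscaling plus editor tweaks), and `score` is a placeholder for whatever brand-alignment rating the creator applies. Only the shape of the workflow is meant to carry over.

```python
from dataclasses import dataclass, replace
import random


@dataclass(frozen=True)
class Candidate:
    """One generated concept. All fields are hypothetical placeholders."""
    concept_id: int
    prompt: str
    seed: int
    score: float          # stand-in for a human or automated brand-fit rating
    stage: str = "draft"  # moves from "draft" to "refined"


def generate_concepts(prompt: str, n: int = 10, base_seed: int = 0) -> list[Candidate]:
    """Hypothetical stub for the fast baseline-model pass.

    A real pipeline would call an image-generation service here; this sketch
    only produces a deterministic placeholder score per seed.
    """
    rng = random.Random(base_seed)
    return [
        Candidate(concept_id=i, prompt=prompt, seed=base_seed + i,
                  score=round(rng.random(), 3))
        for i in range(n)
    ]


def select_top(candidates: list[Candidate], k: int = 3) -> list[Candidate]:
    """Keep the k highest-scoring concepts, breaking ties by concept_id."""
    return sorted(candidates, key=lambda c: (-c.score, c.concept_id))[:k]


def refine(candidate: Candidate) -> Candidate:
    """Hypothetical stub for the high-fidelity pass (upscale, textures, brand edits)."""
    return replace(candidate, stage="refined")


def prototype_cycle(prompt: str, n: int = 10, k: int = 3) -> list[Candidate]:
    """Generate n drafts, shortlist the top k, and refine only the shortlist."""
    drafts = generate_concepts(prompt, n=n)
    shortlist = select_top(drafts, k=k)
    return [refine(c) for c in shortlist]


if __name__ == "__main__":
    for c in prototype_cycle("minimal hero image, warm studio light"):
        print(c.concept_id, c.score, c.stage)
```

The design choice worth noting is that the expensive step (`refine`) runs only on the shortlist, which is where the time savings in this kind of workflow come from.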

2. Social Media Content Clusters

Performance marketers often need to turn a single blog post into a “cluster” of visual assets—Instagram stories, Twitter cards, and LinkedIn headers. The consistency of Nano Banana Pro allows for a unified visual language across these different formats. By feeding a reference image back into the system, the creator ensures that the lighting and color palette remain stable across different aspect ratios.

However, a common pitfall is over-reliance on the “AI look.” Without manual intervention or the use of specific style filters, generative outputs can begin to look homogeneous. The best use case involves taking the AI output and layering it with traditional graphic design elements—typography, logos, and UI overlays—to ground the asset in a recognizable brand identity.

3. Indie Game and App Visuals

For developers, generating icons, UI elements, and background textures is a high-volume task that often distracts from core coding. The canvas workflow allows for the creation of seamless textures and sprites that can be directly exported into a development environment.

The uncertainty here lies in “style locking.” While you can prompt for a specific art style, maintaining that exact style across 50 different icons is challenging. Most professional workflows involve creating a “Style Reference” image first and then using that as an input for all subsequent generations to minimize drift.

The Reality of Constraints

No discussion of these tools is complete without acknowledging where they fail. One of the most persistent issues in the Banana AI ecosystem—and indeed across all generative platforms—is the handling of text within images. While models are improving, they are not yet reliable enough to generate complex signage or specific UI text without errors.

Furthermore, there is a “creativity ceiling” to consider. Generative tools are excellent at interpolating between known styles, but they struggle with genuine visual innovation. If you are trying to create a visual language that has never been seen before, you will likely spend more time fighting the model’s training data than you would just drawing it yourself.

The value proposition of these tools is efficiency in execution, not the replacement of original thought. They are amplifiers for existing ideas.

Integrating AI Without the “AI Feel”

The final stage of any professional use case is the “de-noising” of the creative process. This means removing the tell-tale signs of AI generation—the overly smooth skin, the nonsensical background details, and the saturated lighting.

An experienced operator uses the AI Image Editor to introduce “intentional imperfections.” This might involve adding grain, adjusting the depth of field to hide complex background geometry, or manually correcting a color grade that feels too digital.

By treating the output of Banana Pro as a “raw file” rather than a finished piece, creators can produce assets that stand up to professional scrutiny. This is the core of the modern AI publishing workflow: using the machine for the heavy lifting of generation, while the human focuses on the nuance of curation and finishing.

Ultimately, the breakdown of use cases shows that the tools are moving toward a more structured, pipeline-oriented future. Whether you are using the speed of the baseline models or the depth of the pro-tier canvas, the goal remains the same: reducing the friction between a creative concept and its digital manifestation. Success in this new era depends less on knowing how to prompt and more on knowing which tool in the kit is right for the specific task at hand.