The primary friction point in generative media today is not generation itself; it is drift. For indie makers and small teams, producing a single high-fidelity image is no longer the hurdle. The challenge is producing the eleventh, fiftieth, and hundredth images so that they all feel like they belong to the same visual ecosystem. When every generation is a gamble on lighting, texture, and color temperature, the workflow is not a pipeline; it is a slot machine.

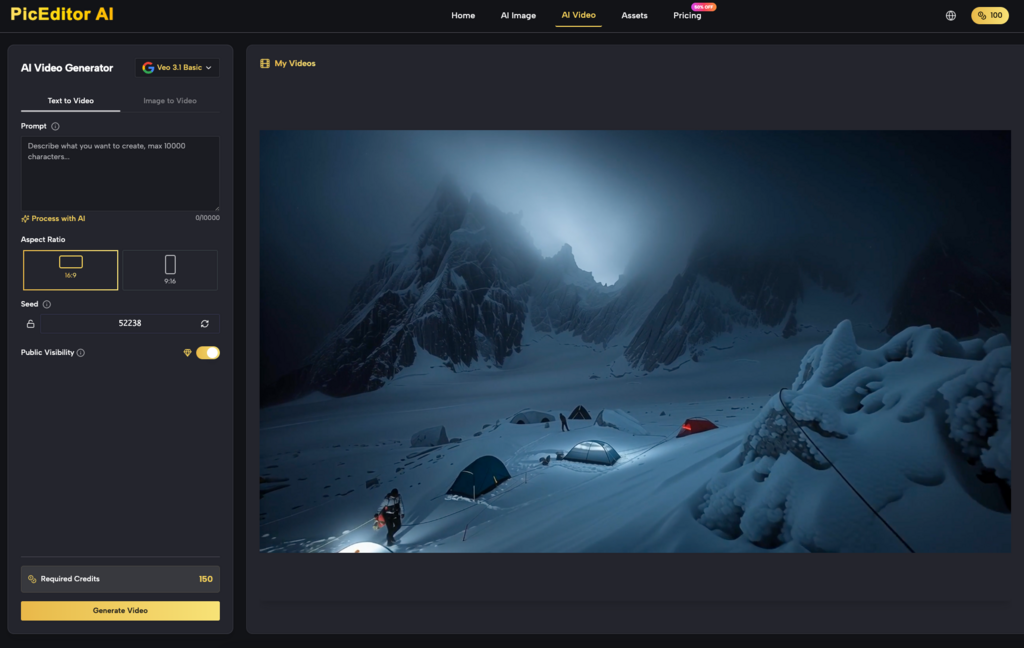

Bridging this “consistency gap” requires moving away from the “one-and-done” prompt mentality. It demands a shift toward a modular editing workflow where the initial generation is merely the raw material. To keep outputs usable in real campaigns, operators are increasingly relying on an integrated AI Photo Editor to enforce brand constraints that models inherently struggle to respect.

The Myth of the Perfect Prompt

In the early stages of the AI boom, the industry over-indexed on the power of the prompt. We were told that with enough descriptive modifiers, a model would eventually output a pixel-perfect brand asset. In practice, prompt engineering has a low ceiling for precision. You can ask for “minimalist corporate blue,” but the model’s interpretation of that blue will shift based on the surrounding context of the image—the lighting, the shadows, and the subjects.

This variation is fine for an experimental social post, but it is catastrophic for a product launch where color accuracy is non-negotiable. If your brand uses #0047AB, and your generator gives you #1A53FF because it “felt” better for the composition, the asset is technically unusable without manual intervention. This is where an AI Image Editor becomes a functional necessity rather than a luxury. It acts as the guardrail, allowing the creator to pull the AI back into the brand’s reality when it wanders off-course.
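To make “technically unusable” measurable, a pipeline can compare a color sampled from the asset against the brand value. The sketch below uses only the Python standard library. It converts hex colors to CIELAB (sRGB, D65 white point) and computes the CIE76 color difference (ΔE*ab), which is the straight-line distance in that space. The function names and the 2.0 tolerance are hypothetical choices for illustration, not a brand standard. Stricter metrics such as CIEDE2000 also exist.

```python
import math

# Hypothetical tolerance; pick a value per brand and per use case (web vs. print).
BRAND_TOLERANCE = 2.0

def hex_to_rgb(hex_color: str) -> tuple[int, int, int]:
    """Parse '#RRGGBB' (either case) into 8-bit channel values."""
    h = hex_color.lstrip("#")
    return int(h[0:2], 16), int(h[2:4], 16), int(h[4:6], 16)

def _linearize(channel: float) -> float:
    """Undo the sRGB transfer curve for a channel value in 0..1."""
    if channel <= 0.04045:
        return channel / 12.92
    return ((channel + 0.055) / 1.055) ** 2.4

def _lab_f(t: float) -> float:
    """CIELAB companding function."""
    delta = 6 / 29
    return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29

def hex_to_lab(hex_color: str) -> tuple[float, float, float]:
    """Convert an sRGB hex color to CIELAB (D65 reference white)."""
    r, g, b = (_linearize(v / 255) for v in hex_to_rgb(hex_color))
    # Linear sRGB -> CIE XYZ (D65).
    x = 0.4124 * r + 0.3576 * g + 0.1805 * b
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    z = 0.0193 * r + 0.1192 * g + 0.9505 * b
    fx, fy, fz = _lab_f(x / 0.95047), _lab_f(y / 1.0), _lab_f(z / 1.08883)
    return 116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz)

def delta_e76(hex_a: str, hex_b: str) -> float:
    """CIE76 difference: Euclidean distance between two colors in CIELAB."""
    return math.dist(hex_to_lab(hex_a), hex_to_lab(hex_b))

def within_brand(sampled: str, brand: str, tolerance: float = BRAND_TOLERANCE) -> bool:
    """True if the sampled color is close enough to the brand color."""
    return delta_e76(sampled, brand) <= tolerance
```

With the colors from the example above, `within_brand("#1A53FF", "#0047AB")` returns `False`. The difference is far larger than the tolerance, so the asset would be routed back for correction instead of being approved on a visual glance.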

The Limitation of Latent Space

One of the biggest uncertainties in current AI workflows is the volatility of the latent space. Even when using fixed seeds and identical settings, minor changes to a prompt can cause a cascading effect on the image structure. We have to acknowledge that current models do not “understand” brand guidelines; they predict pixels based on probability.

This lack of semantic understanding means that things like logo placement, specific textile textures, or the exact curvature of a product’s silhouette are often “approximated.” If you are building a campaign for a brand with a highly specific visual identity, you cannot rely on the model to remember that identity from one generation to the next. You have to hard-code those standards into your post-production workflow.

Modular Workflows: From Generation to Correction

Effective teams have moved toward a three-stage workflow: base generation, structural correction, and aesthetic grading.

In the base generation phase, the goal is not perfection but “compositional viability.” You are looking for the right proportions and lighting. Once you have a base that works, you move into an AI Photo Editor to handle the structural failures. This might involve inpainting to fix distorted limbs or outpainting to expand a frame for specific ad dimensions.
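Expanding a frame for a specific ad placement is mostly arithmetic. You keep the generated pixels at native size and pad only the short side until the canvas matches the target aspect ratio. The padded band is what the outpainting pass then fills. Below is a minimal Python sketch. The function name and the choice to center the source on the new canvas are illustrative assumptions.

```python
def outpaint_canvas(width: int, height: int, ratio_w: int, ratio_h: int) -> dict[str, int]:
    """Smallest canvas with aspect ratio ratio_w:ratio_h that holds the original
    image unscaled, plus the padding to outpaint on each side (source centered)."""
    if width * ratio_h < height * ratio_w:
        # Source is too narrow for the target ratio: widen it.
        # -(-a // b) is ceiling division, so the ratio is never undershot.
        new_w, new_h = -(-height * ratio_w // ratio_h), height
    else:
        # Source is too wide (or already matches): make it taller.
        new_w, new_h = width, -(-width * ratio_h // ratio_w)
    pad_x, pad_y = new_w - width, new_h - height
    return {
        "canvas_w": new_w,
        "canvas_h": new_h,
        "left": pad_x // 2,
        "right": pad_x - pad_x // 2,
        "top": pad_y // 2,
        "bottom": pad_y - pad_y // 2,
    }
```

For a square 1024×1024 base and a 16:9 placement, this gives a 1821×1024 canvas with 398 pixels to fill on the left and 399 on the right. Some generators may also require dimensions divisible by a fixed block size (for example 8 or 64), so you might round the canvas up further before outpainting.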

The structural phase is where most of the time goes. AI still struggles with spatial relationships: a glass that should sit on a table may instead float slightly above it. Catching these micro-errors early is the difference between an asset that looks professional and one that looks visibly broken.

Standardizing the Aesthetic Grade

The final stage is where brand consistency is either won or lost. Even if the subject matter is correct, a campaign will look disjointed if the contrast ratios and saturation levels vary.

Instead of expecting the generator, or the AI Image Editor, to produce the perfect color profile in one pass, many creators find it more efficient to generate in a relatively neutral, “RAW-style” profile and then apply brand-specific LUTs (lookup tables) or color masks in post. That way, even if one generation comes out slightly warmer than the intended look, the final output across the entire campaign is far more likely to stay uniform.
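Here is one way to express “neutral first, brand look second” in code: normalize each generation's color cast, then run every asset through the same lookup table. The sketch below normalizes using the gray-world assumption, which says the average of each channel should come out neutral gray. It then applies a simple per-channel 1D LUT built from a gamma curve, standing in for a brand look. Both are simplifying assumptions. Production grading usually relies on 3D LUTs (for example `.cube` files) applied in a dedicated tool, and gray-world balancing fails on scenes that are legitimately dominated by one color. All function names are hypothetical.

```python
Pixel = tuple[int, int, int]

def gray_world_balance(pixels: list[Pixel]) -> list[Pixel]:
    """Scale each channel so its mean equals the overall mean (gray-world white balance)."""
    n = len(pixels)
    means = [sum(p[c] for p in pixels) / n for c in range(3)]
    target = sum(means) / 3
    gains = [target / m if m > 0 else 1.0 for m in means]
    return [
        tuple(min(255, round(p[c] * gains[c])) for c in range(3))
        for p in pixels
    ]

def gamma_lut(gamma: float) -> list[int]:
    """Build a 256-entry 1D LUT; a hypothetical stand-in for a brand tone curve."""
    return [round(255 * (i / 255) ** gamma) for i in range(256)]

def apply_luts(pixels: list[Pixel], luts: tuple[list[int], list[int], list[int]]) -> list[Pixel]:
    """Map every channel value through that channel's LUT."""
    return [tuple(luts[c][p[c]] for c in range(3)) for p in pixels]

def grade(pixels: list[Pixel], luts: tuple[list[int], list[int], list[int]]) -> list[Pixel]:
    """Neutralize the color cast first, then apply the shared campaign look."""
    return apply_luts(gray_world_balance(pixels), luts)
```

A warm frame such as `[(200, 150, 100)] * 4` and a cool frame such as `[(100, 150, 200)] * 4` both normalize to `(150, 150, 150)` before the LUT is applied, so they come out of `grade` identical. Applying the LUT to un-normalized frames would preserve the difference between them.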

This approach requires a certain level of technical restraint. It is tempting to use the most “vibrant” or “impressive” image the AI provides, but if that image doesn’t match the rest of the set, it must be discarded or heavily modified. Professional output is about the average quality of the set, not the peak quality of a single image.
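Judging the set rather than the single image can be partly automated as a first pass. The sketch below computes a crude per-image brightness statistic and flags images that sit far from the set's median. It measures spread with the median absolute deviation (MAD), so one extreme image does not skew the baseline. The statistic, the `k=3.0` cutoff, and the `min_mad` floor are illustrative assumptions. A real check would likely combine several statistics (saturation, contrast, color cast), and a human still makes the final call.

```python
from statistics import median

Pixel = tuple[int, int, int]

def mean_luma(pixels: list[Pixel]) -> float:
    """Rough brightness proxy: Rec. 709 luma weights on gamma-encoded 8-bit values."""
    return sum(0.2126 * r + 0.7152 * g + 0.0722 * b for r, g, b in pixels) / len(pixels)

def flag_outliers(values: list[float], k: float = 3.0, min_mad: float = 1.0) -> list[int]:
    """Return indices of values more than k * MAD away from the set median."""
    med = median(values)
    # The floor keeps a perfectly uniform set (MAD == 0) from flagging tiny differences.
    mad = max(median([abs(v - med) for v in values]), min_mad)
    return [i for i, v in enumerate(values) if abs(v - med) > k * mad]
```

Given per-image scores of `[100, 102, 98, 101, 160]`, only the last image (index 4) is flagged. It becomes a candidate to discard or regrade, however impressive it looks on its own.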

The Challenge of Lighting Consistency

Light management is one area where results remain especially unpredictable. If you are generating a series of assets featuring the same product in different environments, the AI will frequently change the direction, color temperature, and intensity of the light.

Current tools are still immature when it comes to “locking” a light source across multiple generations. ControlNet models and IP-Adapters can help, but they often introduce artifacts of their own. In a production environment, this means the creator often has to balance shadows manually or use relighting tools within an AI Photo Editor so that the product looks like it exists in the same physical universe across different ads.

Why Inpainting is the New “Layer”

For those of us who grew up in the era of non-destructive editing in Photoshop, the flattened nature of AI outputs can feel like a step backward. When an AI generates an image, the background and foreground are fused. If you want to change the color of a shirt, you often risk changing the skin tone of the person wearing it.

Inpainting has become the functional equivalent of the “adjustment layer.” By masking specific areas and rerunning the AI Image Editor on just those pixels, creators can achieve a level of granular control that mimics traditional editing. This allows for “versioning”—keeping the background constant while swapping out products, models, or seasonal elements.
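The “adjustment layer” behavior comes from compositing. The regenerated pixels are blended back into the original through the mask, so anything outside the mask carries over unchanged. The minimal sketch below works on grayscale images stored as nested lists of floats. That is a simplification, since real tools operate on full-color arrays and may blend in latent space rather than pixel space. The box-blur feathering is an assumed, deliberately simple way to soften the mask edge, and the function names are hypothetical.

```python
Image = list[list[float]]

def feather(mask: Image, radius: int = 1) -> Image:
    """Soften a 0..1 mask with a box blur so the edit fades into its surroundings."""
    h, w = len(mask), len(mask[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            # Average the neighborhood, clamped at the image borders.
            ys = range(max(0, y - radius), min(h, y + radius + 1))
            xs = range(max(0, x - radius), min(w, x + radius + 1))
            window = [mask[j][i] for j in ys for i in xs]
            row.append(sum(window) / len(window))
        out.append(row)
    return out

def composite(original: Image, edited: Image, mask: Image) -> Image:
    """Per pixel: mask * edited + (1 - mask) * original."""
    return [
        [m * e + (1 - m) * o for o, e, m in zip(row_o, row_e, row_m)]
        for row_o, row_e, row_m in zip(original, edited, mask)
    ]
```

Where the mask is 0, the output is exactly the original pixel. This is what keeps the background constant while the masked product or seasonal element is swapped. Feathering trades a little of that guarantee near the edge for a less visible seam.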

However, we must reset expectations regarding the “one-click” nature of these tools. High-quality inpainting often requires multiple passes, denoising strength adjustments, and manual blending. It is not a magic wand; it is a surgical tool. If the denoising is too high, you lose the texture of the original; if it is too low, the change is imperceptible. Finding that “sweet spot” is a manual, iterative process that remains a bottleneck in the workflow.
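Part of why the sweet spot is so narrow is that, in many img2img-style implementations, the strength (denoising) value decides how much of the noise schedule is re-run on top of the original pixels. The sketch below assumes that common convention, where the steps actually run equal `int(total_steps * strength)`. Your tool may compute this differently. The sketch also builds a small bracket of strengths to render side by side, which turns the manual search into a structured comparison. Both function names are hypothetical.

```python
def effective_steps(total_steps: int, strength: float) -> int:
    """Denoising steps actually run, assuming the common int(steps * strength) convention."""
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be in [0, 1]")
    return min(int(total_steps * strength), total_steps)

def strength_bracket(low: float, high: float, step: float) -> list[float]:
    """Evenly spaced strengths from low to high (inclusive) to compare side by side."""
    count = round((high - low) / step) + 1
    # Rounding keeps float noise (e.g. 0.6000000000000001) out of the labels.
    return [round(low + i * step, 2) for i in range(count)]
```

Under this convention, with 50 total steps, a strength of 0.5 re-runs 25 of them and a strength of 0.25 re-runs 12. Rendering `strength_bracket(0.3, 0.6, 0.1)` as a contact sheet makes the texture-versus-change tradeoff visible in one pass.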

Managing Technical Debt in AI Assets

Every time you “fix” an AI image with more AI, you run the risk of introducing technical debt—artifacts that might not be visible at web resolution but become glaringly obvious in print or high-resolution displays. This includes things like “over-sharpening” patterns, chromatic aberration in generated edges, or weird “soupiness” in background textures.

A production-ready workflow must include a high-resolution review phase. Operators should be looking for these artifacts at 100% or 200% zoom. Often, what looks like a perfect brand asset on a smartphone screen is actually a mess of algorithmic noise when scaled up for a website hero image. Having a reliable AI Photo Editor that handles upscaling and sharpening without introducing new hallucinations is critical here.
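Whether an asset will hold up at a larger size is partly simple arithmetic, and it is worth checking before the zoomed-in review. The helpers below compute the upscale factor needed to reach a target dimension and the resulting pixel density at a given print size. The 300 ppi default is a common rule of thumb for print, not a universal requirement, and the function names are illustrative.

```python
def upscale_factor(source_px: int, target_px: int) -> float:
    """How much the image must be enlarged to reach the target dimension."""
    return target_px / source_px

def print_ppi(pixels: int, inches: float) -> float:
    """Pixel density when `pixels` are spread across `inches` of print."""
    return pixels / inches

def max_print_inches(pixels: int, required_ppi: float = 300.0) -> float:
    """Largest print dimension that still meets the assumed ppi target."""
    return pixels / required_ppi
```

A 1024-pixel-wide generation needs a 2.5× upscale to fill a 2560-pixel hero slot. Printed 10 inches wide, it delivers only 102.4 ppi. In both cases most of the final pixels are synthesized by the upscaler, which is where the artifacts described above tend to surface.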

The Human Bottleneck

Despite the automation, the most important part of the pipeline is still the “human in the loop.” AI can generate 1,000 images in the time it takes a human to review ten. This creates a massive data glut. Teams that succeed aren’t the ones who generate the most; they are the ones who have the best filtering and curation standards.

Consistency is a human judgment. An AI can tell you if a pixel is blue, but it cannot tell you if that blue “feels” like your brand. It cannot judge if the facial expression of a generated model aligns with the company’s “approachable but professional” persona.

Building the Repeatable Pipeline

To move from “playing with AI” to “producing with AI,” teams need to document their settings with the same rigor they use for brand books. This includes:

- Fixed Seed Ranges: Using specific seed groups for different campaign “looks.”

- Negative Prompt Libraries: A standardized set of terms to prevent the AI from defaulting to its own generic aesthetic biases.

- Post-Processing Checklists: A mandatory list of edits (such as catchlight correction in the eyes, shadow grounding, and color grading) that every asset must pass through in an AI Image Editor before approval.
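Documenting settings with the same rigor as a brand book can literally mean keeping them in a versioned file that the approval step checks against. The sketch below encodes the three items above as a small Python data structure and reports what blocks an asset from approval. Every field name, seed range, negative-prompt term, and checklist entry here is a hypothetical example, not a standard schema.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class CampaignSpec:
    name: str
    seed_range: range                  # fixed seed group for this campaign "look"
    negative_prompts: tuple[str, ...]  # shared library every generation must include
    checklist: tuple[str, ...]         # post-processing steps every asset must pass

@dataclass
class Asset:
    asset_id: str
    seed: int
    negative_prompt: str               # comma-separated terms used at generation time
    completed_steps: set[str] = field(default_factory=set)

def approval_blockers(asset: Asset, spec: CampaignSpec) -> list[str]:
    """List the reasons an asset cannot be approved yet (empty list means approvable)."""
    blockers = []
    if asset.seed not in spec.seed_range:
        blockers.append(f"seed {asset.seed} is outside the {spec.name} seed range")
    used = {term.strip() for term in asset.negative_prompt.split(",")}
    for term in spec.negative_prompts:
        if term not in used:
            blockers.append(f"negative prompt missing '{term}'")
    for step in spec.checklist:
        if step not in asset.completed_steps:
            blockers.append(f"checklist step not done: {step}")
    return blockers

# Hypothetical campaign definition.
SPRING = CampaignSpec(
    name="spring-launch",
    seed_range=range(41000, 41100),
    negative_prompts=("oversaturated", "lens flare", "text"),
    checklist=("catchlight correction", "shadow grounding", "color grading"),
)
```

Keeping the spec in code (or in an equivalent JSON/YAML file) means the rules live in one reviewable place instead of in each operator's memory. The blocker list also tells a reviewer exactly which step was skipped.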

The “Consistency Gap” will likely narrow as models become more steerable, but it is unlikely to disappear entirely. Generative models are, by definition, stochastic systems. They thrive on variation. Professional design, however, thrives on predictability. The role of the creator in 2024 and beyond is to act as the bridge between those two opposing forces—using the AI Photo Editor not just to “create,” but to “curate” and “correct” until the machine’s output meets the brand’s standard.

Ultimately, the goal is a workflow where the AI does the heavy lifting of rendering, but the human retains the final say on the pixels. It is a partnership that requires both an understanding of what the machine can do and a healthy skepticism of its ability to do it perfectly every time. By hard-coding these standards into the workflow, indie makers and prompt-first creators can finally stop gambling on their outputs and start delivering consistent, high-value assets that actually work in the real world.